However, the effectiveness of limiting third-party political advertising depends on advertisers honestly and transparently identifying who they are or whom they represent.

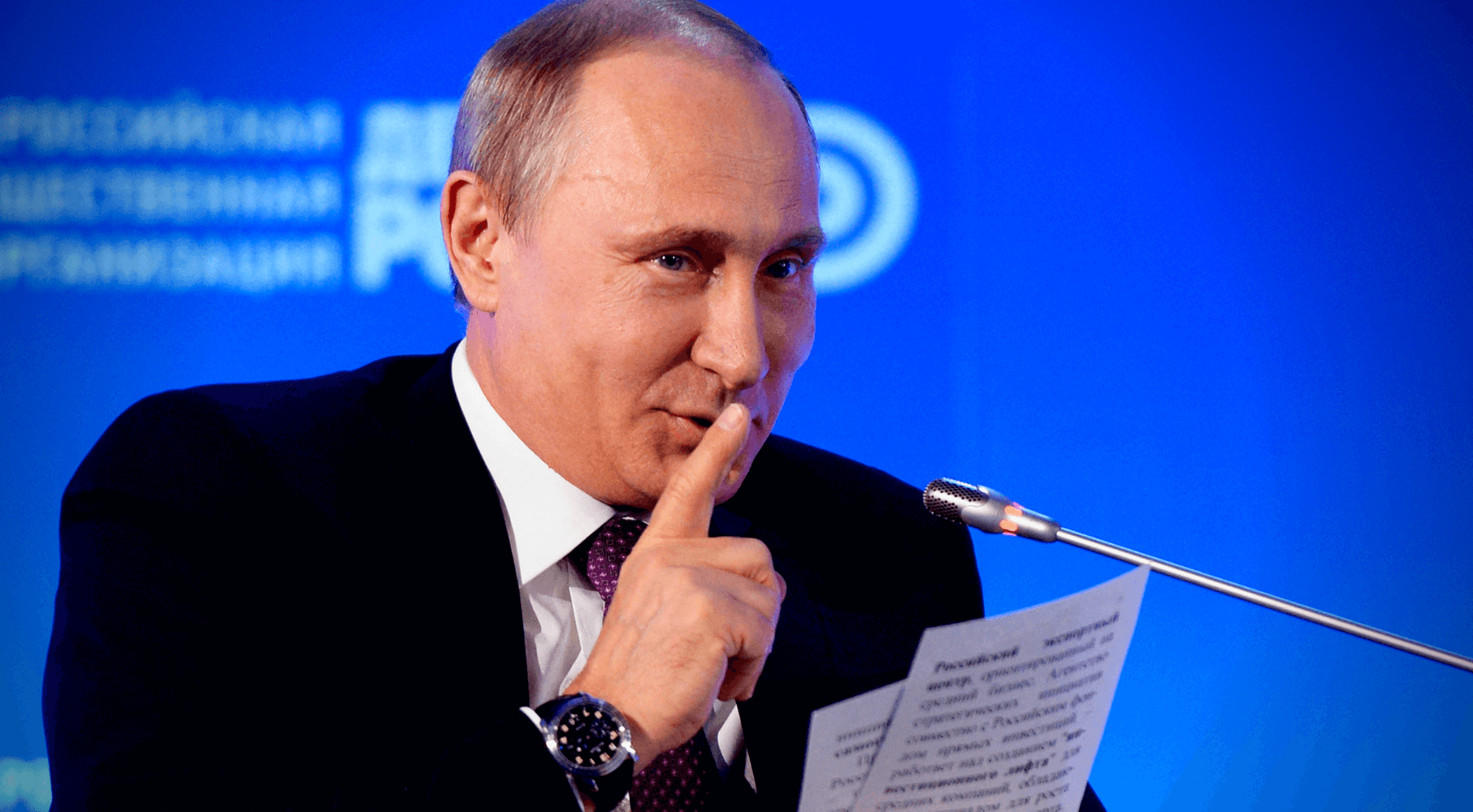

It would be hard to imagine that the trolls who operate out of the Kremlin’s Internet Research Agency, for instance, are using the Kremlin credit card and billing address when they pay for their social media advertising. The same could be said for all other nefarious governments that engage in information warfare and influence campaigns. In the U.S., Russian agents who produced and promoted disinformation stole the identities of Americans and purchased U.S. server space to conceal their Russian origins.

If we wish to protect the integrity of our democracy and institutions from foreign subversion, a more comprehensive understanding and response is required.

Fundamental to this understanding is the recognition that the creation and distribution of false news and disinformation are aimed directly at undermining the trust Canadians place in our political leaders, media, institutions and each other.

Disinformation must be actively monitored and tracked. A disinformation warning system should be developed to alert government officials, the media and the public as attacks are detected.

When hostile foreign governments wish to mask their efforts to interfere with Canadian politics, they often do so through proxy organizations. Funding is channelled to these proxies through networks of NGOs. Such organizations present themselves as grassroots community groups or as business associations promoting trade. Their members amplify government narratives within their communities and beyond. They might also attempt to influence government leaders directly by organizing protests and letter-writing campaigns or by publishing opinion pieces.

In some cases, foreign governments have paid for Canadian political leaders to visit their countries in order to influence them. The Communist Party of China engaged in this practice as recently as this past spring. Canada must ban such trips outright and demand complete disclosure of activities by those who have participated in them.

It is our addiction to social media and the internet that exposes us to the greatest risk of disinformation. Using armies of human trolls and a global network of proxies, our adversaries develop, plant and amplify stories on social media. Artificial intelligence is then used to manipulate search and social media algorithms to blast you, me, our families, friends and every Canadian with disinformation that distorts our opinions, regardless of our existing political views.

We can begin curbing the negative impact of disinformation spread on social media platforms by holding them accountable for the content that their proprietary artificial intelligence systems are programmed to recommend to us.

To be effective, Canada’s information defence strategy must recognize that the ultimate target of our foreign adversaries is the erosion of our international alliances and the disintegration of our democracy.

The efforts of our adversaries will be total, and election interference is just one method that the regimes in Moscow, Beijing and Tehran will use to achieve their goal.

Marcus Kolga is a specialist on Russian disinformation and foreign policy. He is a senior fellow at the Macdonald-Laurier Institute’s Centre for Advancing Canada’s Interests Abroad.